Lung-and-colon-cancer-Classification2 /

inceptionv3-new-paper-code.ipynb

import os
import time
import shutil
import pathlib
import itertools
from PIL import Image

# Import data handling tools
import cv2
import numpy as np
import pandas as pd
import seaborn as sns
sns.set_style('darkgrid')
import matplotlib.pyplot as plt
from sklearn.model_selection import train_test_split
from sklearn.metrics import classification_report, precision_score, recall_score, f1_score

# Import deep learning libraries
import tensorflow as tf
from tensorflow.keras.models import Sequential
from tensorflow.keras.optimizers import Adam, Adamax
from tensorflow.keras.preprocessing.image import ImageDataGenerator
from tensorflow.keras.layers import Conv2D, MaxPooling2D, Flatten, Dense, Activation, Dropout, BatchNormalization, GlobalAveragePooling2D
from tensorflow.keras import regularizers
from tensorflow.keras.callbacks import ModelCheckpoint, EarlyStopping, ReduceLROnPlateau
from tensorflow.keras.applications import DenseNet121, InceptionV3
from tensorflow.keras.models import Model

# Ignore warnings
import warnings
warnings.filterwarnings("ignore")

print('Modules loaded')

# Generate data paths with labels
data_dir = '/kaggle/input/lung-and-colon-cancer-histopathological-images/lung_colon_image_set'
filepaths = []
labels = []

# Map each class folder name to a readable label
class_labels = {
    'colon_aca': 'Colon Adenocarcinoma',
    'colon_n': 'Colon Benign Tissue',
    'lung_aca': 'Lung Adenocarcinoma',
    'lung_n': 'Lung Benign Tissue',
    'lung_scc': 'Lung Squamous Cell Carcinoma',
}

folds = os.listdir(data_dir)

# Generate paths and labels: data_dir/<sub-folder>/<class folder>/<image file>
for fold in folds:
    foldpath = os.path.join(data_dir, fold)
    flist = os.listdir(foldpath)
    for f in flist:
        # Skip anything that is not a known class folder, so filepaths and labels stay the same length
        if f not in class_labels:
            continue
        f_path = os.path.join(foldpath, f)
        filelist = os.listdir(f_path)
        for file in filelist:
            fpath = os.path.join(f_path, file)
            filepaths.append(fpath)
            labels.append(class_labels[f])

# Concatenate data paths with labels into a DataFrame
df = pd.DataFrame({'filepaths': filepaths, 'labels': labels})
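The nested loop above walks two directory levels below `data_dir` and labels each image by the name of its class folder. The same walk can be sketched with `pathlib`. `collect_paths` is a hypothetical helper, and the tiny directory tree is fake data created only to exercise it, so the folder names `sub_a` and `sub_b` are placeholders, not the dataset's real layout.

```python
import tempfile
from pathlib import Path


def collect_paths(root, class_names):
    """Hypothetical helper: walk root/<sub-folder>/<class folder>/<file> and label each file."""
    filepaths, labels = [], []
    for path in sorted(Path(root).glob('*/*/*')):
        class_folder = path.parent.name
        # Keep only files that sit inside one of the known class folders
        if path.is_file() and class_folder in class_names:
            filepaths.append(str(path))
            labels.append(class_names[class_folder])
    return filepaths, labels


# Build a tiny fake tree (placeholder folder names) and run the helper on it
with tempfile.TemporaryDirectory() as root:
    for sub, cls in [('sub_a', 'colon_aca'), ('sub_b', 'lung_n')]:
        folder = Path(root, sub, cls)
        folder.mkdir(parents=True)
        for i in range(3):
            (folder / f'img{i}.jpeg').touch()
    toy_paths, toy_labels = collect_paths(
        root, {'colon_aca': 'Colon Adenocarcinoma', 'lung_n': 'Lung Benign Tissue'})

print(len(toy_paths), sorted(set(toy_labels)))  # 6 ['Colon Adenocarcinoma', 'Lung Benign Tissue']
```

The glob pattern `*/*/*` encodes the same fixed depth as the three nested `os.listdir` calls; files at any other depth are ignored.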

# Split dataset into train (80%), validation (10%) and test (10%) sets, stratified by label
train_df, temp_df = train_test_split(df, train_size=0.8, stratify=df['labels'], random_state=42)
valid_df, test_df = train_test_split(temp_df, train_size=0.5, stratify=temp_df['labels'], random_state=42)
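The two calls above produce an 80/10/10 split: 80% for training, then the remaining 20% halved into validation and test, each stratified by label. The stdlib sketch below reproduces the arithmetic, assuming a balanced dataset of 5,000 images per class (25,000 in total, consistent with the 20000/2500/2500 counts printed in the output at the end). `stratified_split` is a hypothetical helper that shuffles within each class and cuts at the requested fraction; it is not scikit-learn's exact algorithm, so the selected indices will differ.

```python
import random
from collections import Counter, defaultdict


def stratified_split(labels, train_size, seed=42):
    """Hypothetical helper: return two index lists, splitting every class at the same fraction."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for idx, label in enumerate(labels):
        by_label[label].append(idx)
    first, second = [], []
    for idxs in by_label.values():
        rng.shuffle(idxs)                      # shuffle within the class
        cut = round(len(idxs) * train_size)    # e.g. 5000 * 0.8 -> 4000
        first.extend(idxs[:cut])
        second.extend(idxs[cut:])
    return first, second


# Assumed balanced data: 5 classes x 5,000 images
split_labels = [f'class_{c}' for c in range(5) for _ in range(5000)]
train_idx, temp_idx = stratified_split(split_labels, train_size=0.8)

# Second split: halve the held-out 20% into validation and test
temp_labels = [split_labels[i] for i in temp_idx]
valid_pos, test_pos = stratified_split(temp_labels, train_size=0.5)
valid_idx = [temp_idx[i] for i in valid_pos]
test_idx = [temp_idx[i] for i in test_pos]

print(len(train_idx), len(valid_idx), len(test_idx))  # 20000 2500 2500
print(Counter(split_labels[i] for i in test_idx))     # 500 per class
```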

# Define image size, channels, and batch size
batch_size = 64
img_size = (224, 224)
channels = 3
img_shape = (img_size[0], img_size[1], channels)

# Create ImageDataGenerators for training and validation.
# No rescale or preprocessing_function is set, so images are fed as raw 0-255 pixel values.
train_datagen = ImageDataGenerator()
valid_datagen = ImageDataGenerator()

train_gen = train_datagen.flow_from_dataframe(train_df, x_col='filepaths', y_col='labels',
                                              target_size=img_size, class_mode='categorical',
                                              batch_size=batch_size, shuffle=True)

valid_gen = valid_datagen.flow_from_dataframe(valid_df, x_col='filepaths', y_col='labels',
                                              target_size=img_size, class_mode='categorical',
                                              batch_size=batch_size, shuffle=True)

# The test generator is not shuffled, so its predictions stay in the same order as test_gen.classes
test_gen = valid_datagen.flow_from_dataframe(test_df, x_col='filepaths', y_col='labels',
                                             target_size=img_size, class_mode='categorical',
                                             batch_size=batch_size, shuffle=False)

# Number of classes (class_indices maps each label name to its index)
num_classes = len(train_gen.class_indices)
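`num_classes` is read from `train_gen.class_indices`. According to the Keras documentation, when no `classes` list is passed, `flow_from_dataframe` infers the classes from `y_col` and assigns indices in alphanumeric order; with `class_mode='categorical'` each label becomes a one-hot vector. A minimal sketch of that mapping, using the hypothetical helpers `infer_class_indices` and `one_hot` (assumed to mirror, not reproduce, the Keras internals):

```python
def infer_class_indices(labels):
    """Hypothetical helper: map each distinct label to an index in alphanumeric order."""
    return {name: i for i, name in enumerate(sorted(set(labels)))}


def one_hot(label, class_indices):
    """Hypothetical helper: categorical (one-hot) target for a single label."""
    vec = [0.0] * len(class_indices)
    vec[class_indices[label]] = 1.0
    return vec


example_labels = ['Lung Benign Tissue', 'Colon Adenocarcinoma', 'Lung Squamous Cell Carcinoma',
                  'Colon Benign Tissue', 'Lung Adenocarcinoma', 'Colon Adenocarcinoma']
example_indices = infer_class_indices(example_labels)

print(len(example_indices))                              # 5
print(one_hot('Lung Adenocarcinoma', example_indices))   # [0.0, 0.0, 1.0, 0.0, 0.0]
```

Because the order is alphanumeric, index 0 is 'Colon Adenocarcinoma' and index 4 is 'Lung Squamous Cell Carcinoma', regardless of the order in which folders were listed.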

# Define the model: ImageNet-pretrained InceptionV3 backbone (fully fine-tuned) plus a new classification head
#base_model = DenseNet121(input_shape=img_shape, include_top=False, weights='imagenet')
base_model = InceptionV3(input_shape=img_shape, include_top=False, weights='imagenet')
base_model.trainable = True

x = base_model.output
x = GlobalAveragePooling2D()(x)
x = Dense(256, activation='relu')(x)
predictions = Dense(num_classes, activation='softmax')(x)

# The name model_DenseNet is left over from the DenseNet121 variant; the backbone here is InceptionV3
model_DenseNet = Model(inputs=base_model.input, outputs=predictions)

# Compile the model
model_DenseNet.compile(optimizer=Adamax(learning_rate=0.001), loss='categorical_crossentropy', metrics=['accuracy'])
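The head added on top of the backbone averages the final feature map over its spatial axes, then applies `Dense(256, relu)` and a 5-way softmax. The NumPy sketch below walks through that forward pass, assuming that `InceptionV3(include_top=False)` produces a 5x5x2048 feature map for a 224x224x3 input, and using random stand-in weights rather than the trained ones. It also counts the head's trainable parameters; the backbone's parameters are not included.

```python
import numpy as np

rng = np.random.default_rng(0)

# Assumed backbone output for one 224x224x3 image: (batch, height, width, channels)
feature_map = rng.standard_normal((1, 5, 5, 2048)).astype(np.float32)

# Random stand-in weights for Dense(256, relu) and Dense(5, softmax)
w1, b1 = rng.standard_normal((2048, 256)) * 0.01, np.zeros(256)
w2, b2 = rng.standard_normal((256, 5)) * 0.01, np.zeros(5)

pooled = feature_map.mean(axis=(1, 2))          # GlobalAveragePooling2D -> (1, 2048)
hidden = np.maximum(pooled @ w1 + b1, 0.0)      # Dense(256, activation='relu')
logits = hidden @ w2 + b2                       # Dense(5) before the softmax
probs = np.exp(logits - logits.max(axis=1, keepdims=True))
probs /= probs.sum(axis=1, keepdims=True)       # softmax over the 5 classes

# Weights + biases of the two Dense layers
head_params = (2048 * 256 + 256) + (256 * 5 + 5)
print(pooled.shape, probs.shape, head_params)   # (1, 2048) (1, 5) 525829
```

Global average pooling keeps the head small: the Dense(256) layer sees a 2048-vector regardless of the spatial size of the feature map.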

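# Define callbacks
callbacks = [
    # Save the model whenever val_loss reaches a new minimum
    ModelCheckpoint(filepath='best_model.keras', monitor='val_loss', save_best_only=True, verbose=1),
    # Stop after 5 epochs without val_loss improvement and restore the best weights
    EarlyStopping(monitor='val_loss', patience=5, restore_best_weights=True, verbose=1),
    # Multiply the learning rate by 0.2 after 3 epochs without improvement (floor 1e-6)
    ReduceLROnPlateau(monitor='val_loss', factor=0.2, patience=3, min_lr=1e-6, verbose=1)
]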
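The patience counters of the last two callbacks interact: `ReduceLROnPlateau` cuts the learning rate after 3 epochs without a new `val_loss` minimum, and `EarlyStopping` stops after 5 such epochs. The hypothetical `simulate_callbacks` below is a simplified re-implementation, not Keras code (it ignores `min_delta` and `cooldown`, which do not change the outcome for these numbers), replayed on the `val_loss` values from the training log at the end of this notebook.

```python
def simulate_callbacks(val_losses, lr=1e-3, factor=0.2, lr_patience=3, min_lr=1e-6, es_patience=5):
    """Hypothetical, simplified replay of ReduceLROnPlateau + EarlyStopping on a val_loss series."""
    best, best_epoch = float('inf'), 0
    lr_wait = es_wait = 0
    lr_drops = []  # (epoch, new learning rate)
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best:
            # New minimum: both patience counters reset
            best, best_epoch = loss, epoch
            lr_wait = es_wait = 0
            continue
        lr_wait += 1
        es_wait += 1
        if lr_wait >= lr_patience:
            lr = max(lr * factor, min_lr)
            lr_drops.append((epoch, lr))
            lr_wait = 0  # the LR counter restarts after each reduction
        if es_wait >= es_patience:
            return lr_drops, epoch, best_epoch
    return lr_drops, len(val_losses), best_epoch


# val_loss per epoch, copied from the training log
val_losses = [0.12445, 0.1606, 0.00737, 0.00456, 0.0137, 0.00263, 0.0077, 0.0033,
              0.0136, 0.00206, 0.00126, 0.0019, 0.0019, 0.0020, 0.0019, 0.0019]
drops, stopped_epoch, best_epoch = simulate_callbacks(val_losses)

print([epoch for epoch, _ in drops], stopped_epoch, best_epoch)  # [9, 14] 16 11
```

The replay lands on the same events the log reports: learning-rate reductions at epochs 9 and 14, early stopping at epoch 16, and weights restored from epoch 11. The early-stopping counter reached only 3 before epoch 10 produced a new minimum, which is why training did not stop around epoch 9.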

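# Helper function to calculate weighted precision, recall and F1.
# True labels are read batch by batch alongside the predictions, so the two stay aligned
# even for a shuffled generator (generator.classes is always in unshuffled file order).
def calculate_metrics(generator, model):
    y_true, y_pred = [], []
    for i in range(len(generator)):
        x_batch, y_batch = generator[i]
        y_true.append(np.argmax(y_batch, axis=1))
        y_pred.append(np.argmax(model.predict_on_batch(x_batch), axis=1))
    y_true = np.concatenate(y_true)
    y_pred = np.concatenate(y_pred)
    precision = precision_score(y_true, y_pred, average='weighted')
    recall = recall_score(y_true, y_pred, average='weighted')
    f1 = f1_score(y_true, y_pred, average='weighted')
    return precision, recall, f1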
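With `average='weighted'`, scikit-learn computes precision, recall and F1 per class and averages them, weighting each class by its share of the true labels. The stdlib sketch below spells out that definition; `weighted_prf` is a hypothetical helper checked on a 6-sample toy example, and it returns 0.0 where a ratio is undefined (scikit-learn's default also yields 0 there, with a warning). One consequence worth noting: weighted recall always equals plain accuracy, because the sum over classes of (n_c / N) * (TP_c / n_c) is the sum of TP_c / N. That is why the test recall printed at the end matches the test accuracy (0.9996).

```python
from collections import Counter


def weighted_prf(y_true, y_pred):
    """Hypothetical helper: support-weighted precision, recall and F1."""
    support = Counter(y_true)
    total = len(y_true)
    precision = recall = f1 = 0.0
    for cls, n_true in support.items():
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p == cls)
        n_pred = sum(1 for p in y_pred if p == cls)
        p_c = tp / n_pred if n_pred else 0.0
        r_c = tp / n_true
        f_c = 2 * p_c * r_c / (p_c + r_c) if (p_c + r_c) else 0.0
        weight = n_true / total          # class share of the true labels
        precision += weight * p_c
        recall += weight * r_c
        f1 += weight * f_c
    return precision, recall, f1


toy_true = [0, 0, 0, 1, 1, 2]
toy_pred = [0, 0, 1, 1, 1, 2]
print([round(v, 4) for v in weighted_prf(toy_true, toy_pred)])  # [0.8889, 0.8333, 0.8333]

toy_accuracy = sum(t == p for t, p in zip(toy_true, toy_pred)) / len(toy_true)
print(round(toy_accuracy, 4))  # 0.8333, equal to the weighted recall
```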

# Custom callback that prints weighted precision, recall and F1 on the training
# and validation sets at the end of every epoch
class MetricsCallback(tf.keras.callbacks.Callback):
    def on_epoch_end(self, epoch, logs=None):
        # Training metrics (an extra prediction pass over the whole training set)
        train_precision, train_recall, train_f1 = calculate_metrics(train_gen, self.model)
        print(f'Epoch {epoch+1} Training Precision: {train_precision:.4f}, Recall: {train_recall:.4f}, F1 Score: {train_f1:.4f}')

        # Validation metrics
        val_precision, val_recall, val_f1 = calculate_metrics(valid_gen, self.model)
        print(f'Epoch {epoch+1} Validation Precision: {val_precision:.4f}, Recall: {val_recall:.4f}, F1 Score: {val_f1:.4f}')
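In the training log at the end of this notebook, the per-epoch precision, recall and F1 printed by this callback hover around 0.20, which is chance level for five balanced classes, while Keras reports accuracy above 0.99 for the same epochs. This is consistent with a label-order mismatch when `model.predict(generator)` is compared against `generator.classes`: `train_gen` and `valid_gen` use `shuffle=True`, so predictions come back in shuffled order, whereas `generator.classes` lists the labels in unshuffled file order. The test generator uses `shuffle=False`, and its metrics come out at 0.9996. The toy simulation below uses hypothetical data (2,500 labels, 500 per class) to show that even perfect predictions score about 0.2 when compared against labels in the wrong order.

```python
import random

shuffle_rng = random.Random(0)

# What generator.classes holds: labels in unshuffled file order (hypothetical: 5 classes x 500)
labels_in_file_order = [c for c in range(5) for _ in range(500)]

# The order a shuffled generator actually serves the images in
serve_order = list(range(len(labels_in_file_order)))
shuffle_rng.shuffle(serve_order)

# A perfect model: every prediction is correct, but they arrive in serve order
preds_in_serve_order = [labels_in_file_order[i] for i in serve_order]


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


aligned = accuracy([labels_in_file_order[i] for i in serve_order], preds_in_serve_order)
misaligned = accuracy(labels_in_file_order, preds_in_serve_order)
print(aligned)               # 1.0
print(round(misaligned, 2))  # close to 0.2 (chance level)
```

With five equally frequent classes, a random pairing of labels and predictions matches with probability 5 x 0.2^2 = 0.2, which is the level seen in the callback output.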

# Measure training time
# (this wall-clock time also includes the MetricsCallback prediction passes run after every epoch)
start_time = time.time()

# Train the model with the custom metrics callback
history = model_DenseNet.fit(train_gen, validation_data=valid_gen, epochs=20, callbacks=[MetricsCallback()] + callbacks)

end_time = time.time()
training_time = end_time - start_time
print(f'Total Training Time: {training_time:.2f} seconds')

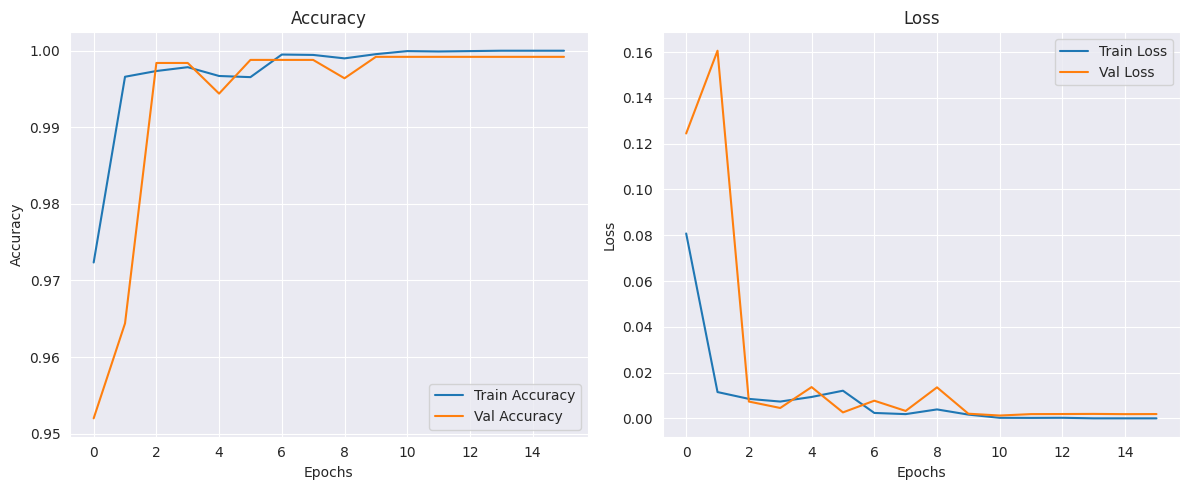

# Plot training history (accuracy and loss)
plt.figure(figsize=(12, 5))

# Plot accuracy
plt.subplot(1, 2, 1)
plt.plot(history.history['accuracy'], label='Train Accuracy')
plt.plot(history.history['val_accuracy'], label='Val Accuracy')
plt.title('Accuracy')
plt.xlabel('Epochs')
plt.ylabel('Accuracy')
plt.legend()

# Plot loss
plt.subplot(1, 2, 2)
plt.plot(history.history['loss'], label='Train Loss')
plt.plot(history.history['val_loss'], label='Val Loss')
plt.title('Loss')
plt.xlabel('Epochs')
plt.ylabel('Loss')
plt.legend()

plt.tight_layout()
plt.show()

# Measure testing time
start_time = time.time()

# Evaluate on the test set
test_loss, test_acc = model_DenseNet.evaluate(test_gen)

end_time = time.time()
testing_time = end_time - start_time
print(f'Test Accuracy: {test_acc:.4f}')
print(f'Total Testing Time: {testing_time:.2f} seconds')

# Final metrics on the test set
test_precision, test_recall, test_f1 = calculate_metrics(test_gen, model_DenseNet)
print(f'Test Precision: {test_precision:.4f}, Recall: {test_recall:.4f}, F1 Score: {test_f1:.4f}')

Modules loaded
Found 20000 validated image filenames belonging to 5 classes.
Found 2500 validated image filenames belonging to 5 classes.
Found 2500 validated image filenames belonging to 5 classes.
Downloading data from https://storage.googleapis.com/tensorflow/keras-applications/inception_v3/inception_v3_weights_tf_dim_ordering_tf_kernels_notop.h5
87910968/87910968 ━━━━━━━━━━━━━━━━━━━━ 4s 0us/step
Epoch 1/20
WARNING: All log messages before absl::InitializeLog() is called are written to STDERR
I0000 00:00:1727877360.012647 68 service.cc:145] XLA service 0x7edf98003240 initialized for platform CUDA (this does not guarantee that XLA will be used). Devices:
I0000 00:00:1727877360.012710 68 service.cc:153] StreamExecutor device (0): Tesla P100-PCIE-16GB, Compute Capability 6.0


I0000 00:00:1727877397.680112 68 asm_compiler.cc:369] ptxas warning : Registers are spilled to local memory in function 'loop_multiply_fusion_18', 12 bytes spill stores, 24 bytes spill loads
I0000 00:00:1727877397.744461 68 device_compiler.h:188] Compiled cluster using XLA! This line is logged at most once for the lifetime of the process.
313/313 ━━━━━━━━━━━━━━━━━━━━ 104s 315ms/step
Epoch 1 Training Precision: 0.2040, Recall: 0.2037, F1 Score: 0.2032
40/40 ━━━━━━━━━━━━━━━━━━━━ 14s 343ms/step
Epoch 1 Validation Precision: 0.2062, Recall: 0.2076, F1 Score: 0.2058
Epoch 1: val_loss improved from inf to 0.12445, saving model to best_model.keras
313/313 ━━━━━━━━━━━━━━━━━━━━ 445s 1s/step - accuracy: 0.9367 - loss: 0.1787 - val_accuracy: 0.9520 - val_loss: 0.1244 - learning_rate: 0.0010
Epoch 2/20
313/313 ━━━━━━━━━━━━━━━━━━━━ 91s 291ms/step
Epoch 2 Training Precision: 0.1986, Recall: 0.1986, F1 Score: 0.1983
40/40 ━━━━━━━━━━━━━━━━━━━━ 12s 288ms/step
Epoch 2 Validation Precision: 0.2071, Recall: 0.2068, F1 Score: 0.2063
Epoch 2: val_loss did not improve from 0.12445
313/313 ━━━━━━━━━━━━━━━━━━━━ 213s 676ms/step - accuracy: 0.9966 - loss: 0.0111 - val_accuracy: 0.9644 - val_loss: 0.1606 - learning_rate: 0.0010
Epoch 3/20
313/313 ━━━━━━━━━━━━━━━━━━━━ 93s 298ms/step
Epoch 3 Training Precision: 0.1979, Recall: 0.1979, F1 Score: 0.1979
40/40 ━━━━━━━━━━━━━━━━━━━━ 12s 290ms/step
Epoch 3 Validation Precision: 0.2032, Recall: 0.2032, F1 Score: 0.2032
Epoch 3: val_loss improved from 0.12445 to 0.00737, saving model to best_model.keras
313/313 ━━━━━━━━━━━━━━━━━━━━ 220s 698ms/step - accuracy: 0.9971 - loss: 0.0106 - val_accuracy: 0.9984 - val_loss: 0.0074 - learning_rate: 0.0010
Epoch 4/20
313/313 ━━━━━━━━━━━━━━━━━━━━ 94s 300ms/step
Epoch 4 Training Precision: 0.1982, Recall: 0.1981, F1 Score: 0.1982
40/40 ━━━━━━━━━━━━━━━━━━━━ 11s 285ms/step
Epoch 4 Validation Precision: 0.2028, Recall: 0.2028, F1 Score: 0.2028
Epoch 4: val_loss improved from 0.00737 to 0.00456, saving model to best_model.keras
313/313 ━━━━━━━━━━━━━━━━━━━━ 218s 690ms/step - accuracy: 0.9976 - loss: 0.0076 - val_accuracy: 0.9984 - val_loss: 0.0046 - learning_rate: 0.0010
Epoch 5/20
313/313 ━━━━━━━━━━━━━━━━━━━━ 95s 304ms/step
Epoch 5 Training Precision: 0.1999, Recall: 0.1998, F1 Score: 0.1999
40/40 ━━━━━━━━━━━━━━━━━━━━ 12s 293ms/step
Epoch 5 Validation Precision: 0.2080, Recall: 0.2080, F1 Score: 0.2080
Epoch 5: val_loss did not improve from 0.00456
313/313 ━━━━━━━━━━━━━━━━━━━━ 218s 690ms/step - accuracy: 0.9975 - loss: 0.0071 - val_accuracy: 0.9944 - val_loss: 0.0137 - learning_rate: 0.0010
Epoch 6/20
313/313 ━━━━━━━━━━━━━━━━━━━━ 95s 303ms/step
Epoch 6 Training Precision: 0.1996, Recall: 0.1996, F1 Score: 0.1996
40/40 ━━━━━━━━━━━━━━━━━━━━ 12s 298ms/step
Epoch 6 Validation Precision: 0.1988, Recall: 0.1988, F1 Score: 0.1988
Epoch 6: val_loss improved from 0.00456 to 0.00263, saving model to best_model.keras
313/313 ━━━━━━━━━━━━━━━━━━━━ 219s 692ms/step - accuracy: 0.9972 - loss: 0.0099 - val_accuracy: 0.9988 - val_loss: 0.0026 - learning_rate: 0.0010
Epoch 7/20
313/313 ━━━━━━━━━━━━━━━━━━━━ 94s 299ms/step
Epoch 7 Training Precision: 0.1975, Recall: 0.1975, F1 Score: 0.1975
40/40 ━━━━━━━━━━━━━━━━━━━━ 11s 286ms/step
Epoch 7 Validation Precision: 0.2052, Recall: 0.2052, F1 Score: 0.2052
Epoch 7: val_loss did not improve from 0.00263
313/313 ━━━━━━━━━━━━━━━━━━━━ 215s 681ms/step - accuracy: 0.9996 - loss: 0.0026 - val_accuracy: 0.9988 - val_loss: 0.0077 - learning_rate: 0.0010
Epoch 8/20
313/313 ━━━━━━━━━━━━━━━━━━━━ 96s 308ms/step
Epoch 8 Training Precision: 0.2022, Recall: 0.2021, F1 Score: 0.2022
40/40 ━━━━━━━━━━━━━━━━━━━━ 12s 291ms/step
Epoch 8 Validation Precision: 0.2024, Recall: 0.2024, F1 Score: 0.2024
Epoch 8: val_loss did not improve from 0.00263
313/313 ━━━━━━━━━━━━━━━━━━━━ 218s 690ms/step - accuracy: 0.9996 - loss: 0.0013 - val_accuracy: 0.9988 - val_loss: 0.0033 - learning_rate: 0.0010
Epoch 9/20
313/313 ━━━━━━━━━━━━━━━━━━━━ 93s 296ms/step
Epoch 9 Training Precision: 0.1976, Recall: 0.1976, F1 Score: 0.1976
40/40 ━━━━━━━━━━━━━━━━━━━━ 11s 271ms/step
Epoch 9 Validation Precision: 0.1872, Recall: 0.1872, F1 Score: 0.1872
Epoch 9: val_loss did not improve from 0.00263
Epoch 9: ReduceLROnPlateau reducing learning rate to 0.00020000000949949026.
313/313 ━━━━━━━━━━━━━━━━━━━━ 214s 676ms/step - accuracy: 0.9996 - loss: 0.0018 - val_accuracy: 0.9964 - val_loss: 0.0136 - learning_rate: 0.0010
Epoch 10/20
313/313 ━━━━━━━━━━━━━━━━━━━━ 93s 297ms/step
Epoch 10 Training Precision: 0.2029, Recall: 0.2029, F1 Score: 0.2029
40/40 ━━━━━━━━━━━━━━━━━━━━ 11s 283ms/step
Epoch 10 Validation Precision: 0.1996, Recall: 0.1996, F1 Score: 0.1996
Epoch 10: val_loss improved from 0.00263 to 0.00206, saving model to best_model.keras
313/313 ━━━━━━━━━━━━━━━━━━━━ 213s 676ms/step - accuracy: 0.9987 - loss: 0.0034 - val_accuracy: 0.9992 - val_loss: 0.0021 - learning_rate: 2.0000e-04
Epoch 11/20
313/313 ━━━━━━━━━━━━━━━━━━━━ 93s 297ms/step
Epoch 11 Training Precision: 0.1991, Recall: 0.1991, F1 Score: 0.1991
40/40 ━━━━━━━━━━━━━━━━━━━━ 11s 276ms/step
Epoch 11 Validation Precision: 0.1908, Recall: 0.1908, F1 Score: 0.1908
Epoch 11: val_loss improved from 0.00206 to 0.00126, saving model to best_model.keras
313/313 ━━━━━━━━━━━━━━━━━━━━ 214s 678ms/step - accuracy: 1.0000 - loss: 2.5278e-04 - val_accuracy: 0.9992 - val_loss: 0.0013 - learning_rate: 2.0000e-04
Epoch 12/20
313/313 ━━━━━━━━━━━━━━━━━━━━ 96s 305ms/step
Epoch 12 Training Precision: 0.1983, Recall: 0.1983, F1 Score: 0.1983
40/40 ━━━━━━━━━━━━━━━━━━━━ 12s 297ms/step
Epoch 12 Validation Precision: 0.1808, Recall: 0.1808, F1 Score: 0.1808
Epoch 12: val_loss did not improve from 0.00126
313/313 ━━━━━━━━━━━━━━━━━━━━ 218s 691ms/step - accuracy: 0.9999 - loss: 2.0833e-04 - val_accuracy: 0.9992 - val_loss: 0.0019 - learning_rate: 2.0000e-04
Epoch 13/20
313/313 ━━━━━━━━━━━━━━━━━━━━ 92s 293ms/step
Epoch 13 Training Precision: 0.1990, Recall: 0.1990, F1 Score: 0.1990
40/40 ━━━━━━━━━━━━━━━━━━━━ 11s 285ms/step
Epoch 13 Validation Precision: 0.2028, Recall: 0.2028, F1 Score: 0.2028
Epoch 13: val_loss did not improve from 0.00126
313/313 ━━━━━━━━━━━━━━━━━━━━ 217s 686ms/step - accuracy: 1.0000 - loss: 1.3029e-04 - val_accuracy: 0.9992 - val_loss: 0.0019 - learning_rate: 2.0000e-04
Epoch 14/20
313/313 ━━━━━━━━━━━━━━━━━━━━ 93s 296ms/step
Epoch 14 Training Precision: 0.1993, Recall: 0.1993, F1 Score: 0.1993
40/40 ━━━━━━━━━━━━━━━━━━━━ 11s 281ms/step
Epoch 14 Validation Precision: 0.2096, Recall: 0.2096, F1 Score: 0.2096
Epoch 14: val_loss did not improve from 0.00126
Epoch 14: ReduceLROnPlateau reducing learning rate to 4.0000001899898055e-05.
313/313 ━━━━━━━━━━━━━━━━━━━━ 215s 679ms/step - accuracy: 1.0000 - loss: 4.2404e-05 - val_accuracy: 0.9992 - val_loss: 0.0020 - learning_rate: 2.0000e-04
Epoch 15/20
313/313 ━━━━━━━━━━━━━━━━━━━━ 91s 291ms/step
Epoch 15 Training Precision: 0.2021, Recall: 0.2021, F1 Score: 0.2021
40/40 ━━━━━━━━━━━━━━━━━━━━ 11s 284ms/step
Epoch 15 Validation Precision: 0.1928, Recall: 0.1928, F1 Score: 0.1928
Epoch 15: val_loss did not improve from 0.00126
313/313 ━━━━━━━━━━━━━━━━━━━━ 211s 670ms/step - accuracy: 1.0000 - loss: 5.6256e-05 - val_accuracy: 0.9992 - val_loss: 0.0019 - learning_rate: 4.0000e-05
Epoch 16/20
313/313 ━━━━━━━━━━━━━━━━━━━━ 93s 298ms/step
Epoch 16 Training Precision: 0.1956, Recall: 0.1956, F1 Score: 0.1956
40/40 ━━━━━━━━━━━━━━━━━━━━ 11s 277ms/step
Epoch 16 Validation Precision: 0.2080, Recall: 0.2080, F1 Score: 0.2080
Epoch 16: val_loss did not improve from 0.00126
313/313 ━━━━━━━━━━━━━━━━━━━━ 213s 673ms/step - accuracy: 1.0000 - loss: 3.8977e-05 - val_accuracy: 0.9992 - val_loss: 0.0019 - learning_rate: 4.0000e-05
Epoch 16: early stopping
Restoring model weights from the end of the best epoch: 11.
Total Training Time: 3681.97 seconds
40/40 ━━━━━━━━━━━━━━━━━━━━ 26s 647ms/step - accuracy: 0.9998 - loss: 3.0504e-04
Test Accuracy: 0.9996
Total Testing Time: 26.87 seconds
40/40 ━━━━━━━━━━━━━━━━━━━━ 11s 285ms/step
Test Precision: 0.9996, Recall: 0.9996, F1 Score: 0.9996